Get in touch

Find key contacts for enquiries about funding, partnerships, collaborations and doctoral degrees.

Excellence and Integrity

Transforming Lives

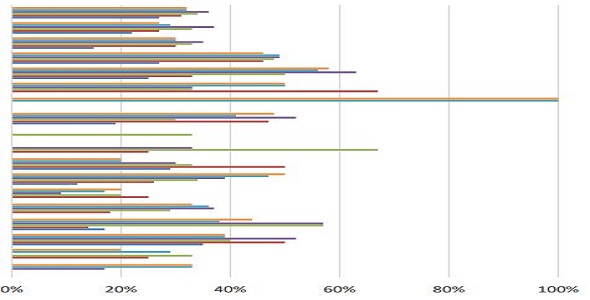

How we are delivering innovative, world-leading research with transformative impact

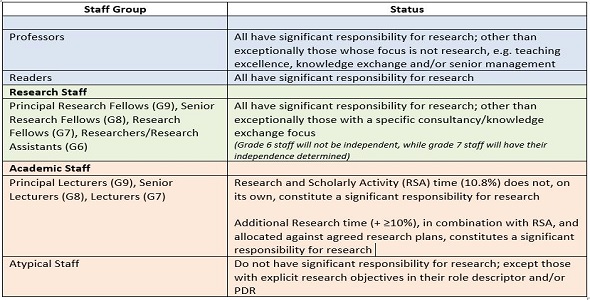

Research Excellence Framework

Ethics and Integrity
